Incentives to adopt open science practices in your daily research

by the Open Science initiative at the Max-Planck-Institute for Human Cognitive and Brain Sciences

Introduction

“Open Science” (OS) is an umbrella term for research practices that aim to increase the openness, transparency, rigor, reproducibility and replicability of the scientific process (Crüwell et al., 2019). These practices apply at all stages of a research project and thereby enable researchers to do credible science (Arza and Fressoli, 2017; Munafò et al., 2017).

In the following, we (the OS initiative at the Max-Planck-Institute for Human Cognitive and Brain Sciences) have collected short, simple definitions of common OS practices along with arguments for their implementation, at our Institute and beyond. We focused particularly on the various ways in which open science practices can benefit individual researchers, including you.

General benefits of OS

- saves resources by conducting reproducible studies. For more information, see our resource document on how to create a reproducible workflow.

- helps you to avoid errors and perform correct analysis

- enables you to re-use materials and analysis scripts for new studies

- helps you to reproduce your own results from previous studies (Lowndes et al., 2017)

- makes it easier to integrate new colleagues into your research projects

- enables you and others to keep working on your project even if you leave your laboratory

Benefits of open data, materials and open code

Open data, materials and code allow other researchers to rerun analyses and check the reproducibility of a given study. This is fundamental for detecting errors and biases in studies and for basing science on verifiability, not trust (Klein et al., 2018). Publishing data, materials and code relies on a well-organized and transparent data structure, scripted analysis code and auxiliary files (such as README files and standard operating procedures).

TRANSPARENCY in your data structure and analysis scripts

- saves resources by conducting reproducible studies. For more information, see our resource document on how to create a reproducible workflow.

- helps you to avoid errors and perform correct analysis

OPEN CODE

- will benefit other scientists (and eventually you) by saving time and resources (especially relevant for neuroscience; Eglen et al., 2017)

- helps to engage the community with your science (Barnes, 2010)

OPEN DATA

- enhances the credibility of your research (Klein et al., 2018)

- boosts efficiency of scientific discovery: all research products from the study can be reused (Klein et al., 2018)

- allows you to publish in certain journals with a mandatory open data sharing policy (such as Cognition, Science, PLOS, see here)

- gives you a citation benefit (Piwowar and Vision, 2013)

- protects you against data loss

- can be a publication on its own (in the form of a data paper or when getting a digital object identifier (DOI) from a sharing platform)

- in turn, openly available datasets allow you to quickly increase the power of your own studies or perform replication analyses (Choudhury et al., 2014; Walport and Brest, 2011)

Benefits of publishing your research in the form of preprints, postprints and open access

Preprints and postprints are two forms of eprints: versions of the manuscript that precede official publication in a scientific journal (Harnad, 2003; Tennant et al., 2018).

Preprints are scientific manuscripts that are publicly shared before they have been peer-reviewed. They can be given a DOI at this stage, and can thus be cited.

Postprints are articles that have already been peer-reviewed and accepted for publication, but have not yet been formatted by the publisher. Many journals allow publication in this form, although they differ in the restrictions they impose on authors, e.g. an embargo period after the paper's release during which the postprint may not be published. Journal policies can be conveniently checked, for example, in the SHERPA ROMEO repository. Publishing a postprint gives all interested researchers free access to the paper's content, which is particularly useful if the target journal charges readers a fee that not all of them can afford.

Publishing open access (OA) is a mode of publication in scientific journals that guarantees free access to research articles without paywalls. Journals differ in the degree to which they implement open access: some publish open access by default (gold OA), some allow self-archiving and postprints (green OA), while others only offer an OA option and publish their remaining articles behind a paywall (hybrid OA). Most gold OA journals require payment of Article Processing Charges (APCs), which replace subscription charges and finance publishing. These are usually covered by the institutions. The Max Planck Digital Library covers APCs for Max Planck researchers for many journals (for a list, see here and here). Some journals also offer gold OA free of charge to the researcher and institution. These journals are often referred to as platinum OA (e.g. Biolinguistics).

PUBLISHING PREPRINTS

- Increases your chances when applying for funding or a new position. Funding agencies (e.g. the DFG) often need to be able to read an applicant’s publications. This is possible for preprints but not for papers that are merely submitted or under review, which are therefore often not taken into account

- Increases citation number: on average, papers that were preceded by preprints are cited more frequently than papers that were not (Abdill and Blekhman, 2019; Fraser et al., 2019)

- Ensures quicker dissemination of the research

- Gives you an opportunity to obtain feedback from voluntary reviewers before the manuscript is published (Maggio et al., 2018; Sarabipour et al., 2019)

- Documents the date on which your discovery was made public

- Is explicitly encouraged by some journals (e.g. eLife) offering preprint review and scoop protection (i.e. if a manuscript with a similar scope has been published after your preprint submission, it will not be a reason for rejection)

POSTPRINTS AND OPEN ACCESS PUBLISHING

-

Increase the visibility of research by granting the accessibility to the manuscript to potentially unlimited group of readers

-

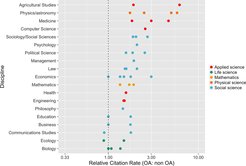

Increase citations in comparison with non-OA papers (Hajjem et al., 2006; Piwowar and Vision, 2013; Wang et al., 2015)

-

Eventually translate into savings for your research institutions (if all papers are available OA): universities do not have to buy back their own work; tax-funded research is accessible for those who paid for it

-

Support equality in the research community – research is available to all researchers regardless of their own financial resources or those of their research institution or their country

Figure 1: Open access articles are cited more frequently than non-open-access publications. The figure depicts the combined results of multiple studies measuring the relative citation rate of OA to non-OA papers, i.e. the ratio of the mean citation rate of OA papers to the mean citation rate of non-OA papers. Multiple points for the same discipline denote various estimates reported in the same study or in several different studies.
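
To make the measure in Figure 1 concrete, here is a minimal Python sketch of the relative citation rate as defined in the caption. The function name and the citation counts are made up for illustration and are not taken from any of the cited studies.

```python
from statistics import mean


def relative_citation_rate(oa_citations, non_oa_citations):
    """Ratio of the mean citation count of OA papers to that of non-OA papers."""
    if not oa_citations or not non_oa_citations:
        raise ValueError("both groups need at least one paper")
    non_oa_mean = mean(non_oa_citations)
    if non_oa_mean == 0:
        raise ValueError("mean citation rate of non-OA papers is zero")
    return mean(oa_citations) / non_oa_mean


# Hypothetical citation counts per paper (illustration only, not real data)
oa = [12, 8, 15, 5]
non_oa = [6, 9, 4, 5]
print(f"relative citation rate: {relative_citation_rate(oa, non_oa):.2f}")
```

A value above 1 means that the OA papers in the sample were, on average, cited more often than the non-OA papers; a value of 1 means no difference.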


Benefits of preregistrations and registered reports

Preregistration is the procedure of registering the parameters of a study (mainly the hypotheses and the analysis plan) on dedicated platforms. It aims to prevent researchers from generating hypotheses post hoc (after the data have already been collected) and from changing analytical decisions depending on the results, a practice that has greatly contributed to the replication crisis (Simmons et al., 2011). Preregistration also makes it possible to trace any changes to the planned steps and their justifications, making the process transparent and plausible to readers.

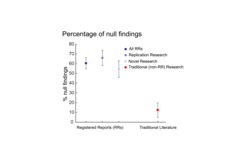

Registered reports extend the concept of preregistration. They are scientific articles chosen for publication based on their detailed research plan submitted prior to data collection. The proposals are peer reviewed twice: 1) before the start of the experiment, when the introduction and methods sections are evaluated and changes are suggested, 2) after the experiment is finished, to assess the researchers’ compliance to the accepted proposal and the quality of the discussion.

Figure 2: Registered reports act against publication biasby publication of true null findings. Figure depicts percentages of null findings among RRs aiming at replication (n = 153) or testing novel hypotheses (n = 143) and proportion of null findings that have been previously reported for traditional (non-RR) literature and their respective 95% confidence intervals.
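
As a rough illustration of how a percentage of null findings and its 95% confidence interval can be computed, the following Python sketch uses the Wilson score interval. This is an assumption: the source does not state which interval method underlies Figure 2, and the counts below are hypothetical rather than the figure's data.

```python
from math import sqrt


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Hypothetical example: 60 null findings among 100 studies (not real data)
null_findings, total = 60, 100
low, high = wilson_interval(null_findings, total)
print(f"{null_findings / total:.0%} null findings, 95% CI [{low:.1%}, {high:.1%}]")
```

The Wilson interval is used here because it stays within 0 and 1 and behaves well for small samples; other interval methods would give slightly different bounds.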

PREREGISTRATION

- Helps you to formulate specific hypotheses and develop a concrete timeline for your project

- Presents an opportunity to get feedback and to discover potential flaws in your study design before data collection

- Is supported or even requested by an increasing number of journals in our field, e.g. Psychological Science, Nature Human Behaviour

- Protects you from reviewers pressuring you to change hypotheses post hoc (Wagenmakers and Dutilh, 2016)

- Presents an opportunity to act against publication bias, because preregistered studies are more likely to report null findings than the general scientific literature (Allen and Mehler, 2019)

- Increases credibility of the research outcomes (Nosek et al., 2018)

REGISTERED REPORT

- In addition to the above-mentioned advantages of preregistration, registered reports give you the certainty of publication regardless of the result and decrease the pressure to “produce” positive results.

For detailed information and resources on preregistration and registered reports, please have a look at our Resources for Preregistration and Registered Reports.

References

Abdill, RJ, Blekhman, R. (2019) Tracking the popularity and outcomes of all bioRxiv preprints. eLife, 8:e45133.

Allen, C, Mehler, DMA. (2019) Open science challenges, benefits and tips in early career and beyond. PLoS Biol., 17:e3000246.

Arza, V, Fressoli, M. (2017) Systematizing benefits of open science practices. Information Services & Use, 37:463-474.

Barnes, N. (2010) Publish your computer code: it is good enough. Nature, 467:753-753.

Choudhury, S, Fishman, JR, McGowan, ML, Juengst, ET. (2014) Big data, open science and the brain: lessons learned from genomics. Front. Hum. Neurosci., 8:239.

Crüwell, S, van Doorn, J, Etz, A, Makel, MC, Moshontz, H, Niebaum, J, Orben, A, Parsons, S, Schulte-Mecklenbeck, M. (2019) 7 Easy Steps to Open Science: An Annotated Reading List.

Eglen, SJ, Marwick, B, Halchenko, YO, Hanke, M, Sufi, S, Gleeson, P, Silver, RA, Davison, AP, Lanyon, L, Abrams, M, Wachtler, T, Willshaw, DJ, Pouzat, C, Poline, J-B. (2017) Toward standard practices for sharing computer code and programs in neuroscience. Nature Neuroscience, 20:770-773.

Fraser, N, Momeni, F, Mayr, P, Peters, I. (2019) The effect of bioRxiv preprints on citations and altmetrics. bioRxiv:673665.

Gervais, WM, Jewell, JA, Najle, MB, Ng, BKL. (2015) A powerful nudge? Presenting calculable consequences of underpowered research shifts incentives toward adequately powered designs. Soc. Psychol. Personal. Sci., 6:847-854.

Hajjem, C, Harnad, S, Gingras, Y. (2006) Ten-year cross-disciplinary comparison of the growth of open access and how it increases research citation impact. arXiv preprint cs/0606079.

Klein, O, Hardwicke, TE, Aust, F, Breuer, J, Danielsson, H, Hofelich Mohr, A, Ijzerman, H, Nilsonne, G, Vanpaemel, W, Frank, MC. (2018) A practical guide for transparency in psychological science. Collabra: Psychology, 4:1-15.

Lowndes, JSS, Best, BD, Scarborough, C, Afflerbach, JC, Frazier, MR, O’Hara, CC, Jiang, N, Halpern, BS. (2017) Our path to better science in less time using open data science tools. Nature Ecology & Evolution, 1:0160.

Maggio, LA, Artino Jr, AR, Driessen, EW. (2018) Preprints: Facilitating early discovery, access, and feedback. Perspectives on Medical Education, 7:287-289.

McKiernan, EC, Bourne, PE, Brown, CT, Buck, S, Kenall, A, Lin, J, McDougall, D, Nosek, BA, Ram, K, Soderberg, CK. (2016) Point of view: How open science helps researchers succeed. eLife, 5:e16800.

Munafò, MR, Nosek, BA, Bishop, DVM, Button, KS, Chambers, CD, Du Sert, NP, Simonsohn, U, Wagenmakers, E-J, Ware, JJ, Ioannidis, JPA. (2017) A manifesto for reproducible science. Nature Human Behaviour, 1:1-9.

Nosek, BA, Ebersole, CR, DeHaven, AC, Mellor, DT. (2018) The preregistration revolution. Proceedings of the National Academy of Sciences, 115:2600-2606.

Piwowar, HA, Vision, TJ. (2013) Data reuse and the open data citation advantage. PeerJ, 1:e175.

Poldrack, RA. (2019) The Costs of Reproducibility. Neuron, 101:11-14.

Sarabipour, S, Debat, HJ, Emmott, E, Burgess, SJ, Schwessinger, B, Hensel, Z. (2019) On the value of preprints: An early career researcher perspective. PLoS Biol., 17:e3000151.

Simmons, JP, Nelson, LD, Simonsohn, U. (2011) False-positive psychology: Undisclosed flexibility in data collection and analysis allows presenting anything as significant. Psychological Science, 22:1359-1366.

Wagenmakers, E-J, Dutilh, G. (2016) Seven selfish reasons for preregistration. APS Observer, 29.

Walport, M, Brest, P. (2011) Sharing research data to improve public health. The Lancet, 377:537-539.

Wang, X, Liu, C, Mao, W, Fang, Z. (2015) The open access advantage considering citation, article usage and social media attention. Scientometrics, 103:555-564.